Introduction

I very much hope that this avoids being a rant. I also hope it is helpful rather than self-indulgently clever. Finally, I understand that people learn in different ways. I tend to like images, and to get the big picture before getting into detail. Other people like to be led along a path through all the detail, and others again prefer words.

With all that out of the way(!), what am I trying to say? I am trying to point out the dangers of reductionism – reducing things down to details – in software development. Sometimes reductionism is the correct strategy, but it can be done too often, too much, too early and so on.

I understand the reductionist approach, and I see it happening often, not just in software development. Many technical disciplines have details that matter a lot, but software development is especially prone to this because compilers are picky and occasionally unhelpful. Where Word would just give you a squiggly underline, a piece of punctuation in the wrong place in your code can stop it compiling, or silently change its behaviour. As programmers and others involved in software development, we are trained, informally and formally, by people and by our tools, to worry about details.

So, I agree that details matter, but often the stuff around the details doesn’t get its fair share of time and thought. That’s why it’s inappropriate reductionism that’s the problem, not the details themselves.

There are three areas in software development where I’ve noticed this problem:

- Coding

- Testing

- Documenting

I’ll describe the general problem a bit more, and then go through those areas.

General problem

Background

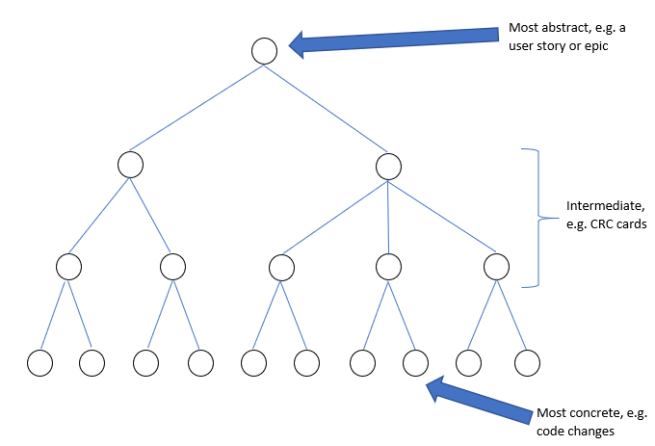

When coding, documenting or testing software, we are doing something that takes a lot of thought. It’s a big and complicated task with lots of detail. You can summarise that detail into a smaller and smaller collection of things that are increasingly abstract. (Alternatively, you could think of it as a single abstract thing that gets broken down into more and more things that are increasingly concrete.)

Problem

Given that the work breaks down into so many parts, at many different levels of abstraction, the question is: which parts do we do, and when?

The traditional waterfall approach is to do it like this:

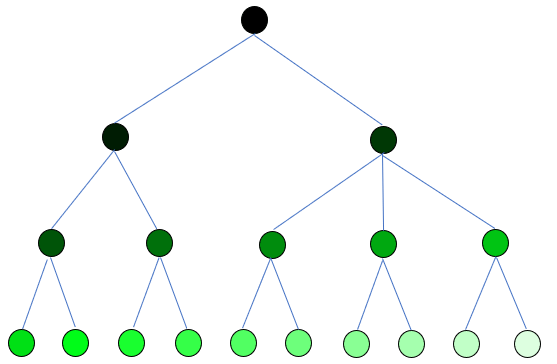

The blobs are done in the order darkest to lightest. In terms of tree traversal, this is breadth-first – you go along the breadth of one layer before starting on the next layer down.

In the Agile world this is referred to as Big Design Up Front (BDUF), and is to be avoided. It delays the point at which feedback can happen (feedback tends to come only via the most concrete layer, e.g. running code). It also makes change expensive.

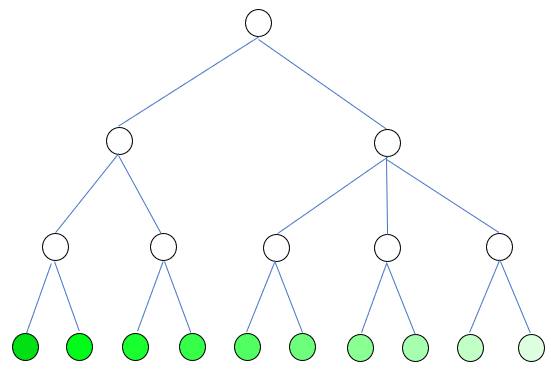

Unfortunately, a reaction to this can include throwing the baby out with the bath-water. Little to no thought at the intermediate levels of abstraction happens before detailed work (e.g. coding) starts:

This is inappropriate reductionism – too much focus on the details, too early.

Designing

In my experience, Test-Driven Development (TDD) can lead to inappropriate reductionism.

It can devolve into solving a series of small low-level problems:

- While not finished:

  - Get the next thing to do

  - Write a test

  - Write some code

I’m not saying that everyone works like that, but I think that it is a force that acts on everyone. There can be too little thinking at higher levels of abstraction.

The problem with doing this is that you can code yourself into a corner. Half an hour of big-picture thinking can be well worth it in terms of dead ends avoided (dead ends that would otherwise have been coded, with tests nicely driving and covering them). Note, I’m not advocating a return to BDUF, just Some Design Up Front.

People can get the Agile Manifesto wrong. Its “X over Y” (X > Y) doesn’t mean Y is worthless and should not be done; it just means that X is more important than Y. I think it’s possible to get TDD wrong too. I don’t think it should exclude design thinking; rather, design should happen as it’s needed, in appropriate amounts, and expressed in an appropriate way.

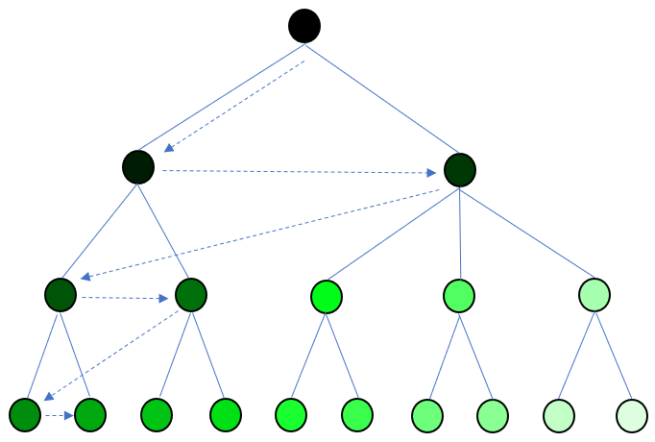

This could lead to a way of working along these lines:

The design is filled out gradually, both in terms of depth and breadth. I’m not sure what this kind of tree traversal is called, but the general approach is to do all immediate children of a node, and then move to the first child and repeat.

You are confident that the left-hand blob of the second layer is correct, because you have also done the other blob in that layer; therefore, at that level of abstraction, you know that things fit together to make the whole (their parent in the layer above). Then, at the third layer, you only need to do the two left-most blobs before going down to a more detailed layer, as they are all the parts of their parent in layer two, and you’re already confident in that parent for the reason just described. And so on.

Concrete details are reached more quickly and at lower cost than with BDUF, but there is more confidence than with the little-to-no-design approach that they will fit with other concrete details, because they are surrounded by the appropriate amount of design.
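The two traversal orders can be made a little more concrete with a small Python sketch. It assumes the work is modelled as a tree of hypothetical `Node` objects, each with a `name` and a list of more concrete `children`. The function names `breadth_first` and `siblings_then_descend` are labels made up for this sketch, not established terms. Each function just returns the order in which the blobs would be worked on.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical blob of work; its children are its more concrete parts."""
    name: str
    children: list[Node] = field(default_factory=list)


def breadth_first(root: Node) -> list[str]:
    """BDUF order: finish each whole layer before starting the layer below."""
    order: list[str] = []
    queue = deque([root])
    while queue:
        node = queue.popleft()
        order.append(node.name)
        queue.extend(node.children)  # these wait until the current layer is done
    return order


def siblings_then_descend(root: Node) -> list[str]:
    """Do all immediate children of a node, then move to the first child and repeat.

    The function name is made up for this sketch.
    """
    order = [root.name]

    def visit(node: Node) -> None:
        # Design all the parts of this node, so we know they fit together...
        order.extend(child.name for child in node.children)
        # ...then go deeper, starting with the left-most part.
        for child in node.children:
            visit(child)

    visit(root)
    return order


# A small tree: the whole task, two parts, and more concrete details below them.
tree = Node("task", [
    Node("A", [Node("A1", [Node("A1a"), Node("A1b")]), Node("A2")]),
    Node("B", [Node("B1"), Node("B2")]),
])

print(breadth_first(tree))
# ['task', 'A', 'B', 'A1', 'A2', 'B1', 'B2', 'A1a', 'A1b']
print(siblings_then_descend(tree))
# ['task', 'A', 'B', 'A1', 'A2', 'A1a', 'A1b', 'B1', 'B2']
```

On this tree, the breadth-first (BDUF) order leaves the most concrete blobs, `A1a` and `A1b`, until the very end. The second order reaches them before anything under `B`, but only after their siblings and their parents have been done.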

Testing

I think that programmers, including me, often have a skewed view of testing. It’s very easy to extrapolate (wrongly) from unit testing and assume that all testing can be broken down into a series of test cases, which can then be automated.

Michael Bolton is a testing guru, and so someone I listen to when it comes to testing. On the Ministry of Testing Slack channel he said:

We could say that a test case is a question you want to ask of the product. That leaves enormous scope for what a test case could address. Please, let’s not confuse test cases with testing. No other field that involves complex cognitive activity uses “cases” to structure its work; not management, programming, piloting, research, parenting…

A colleague of his, James Bach, co-authored an interesting paper saying that testing != test cases. It goes into much more depth and is really worth the read.

If you are a programmer like me (or a recruiter, manager, etc.), then there are all sorts of invalid assumptions and prejudices that the paper could dredge up. Testing done properly is an activity done by skilled people. It might be automated, it might be entirely manual, or somewhere in between. It might have detailed test cases, it might have none, or it might assemble something on the fly from small pieces of structure.

It will consider users, risk, cost, value, and how the system’s behaviour compares with what users need. It is a much broader thing than merely sitting at a computer working through a list of test cases in a spreadsheet – it will respond to information about the system’s behaviour as the testing uncovers it.

While test cases can be useful, giving them too much importance can be a symptom of a wide-ranging problem that affects many things, such as how people’s worth and performance are judged, who gets blamed for what and when, who gets to decide what and when, and so on.

Bolton and Bach both say it much better than I could, so I will leave it at that – please read the paper.

Documentation

Documentation isn’t something that only professional technical authors do. There are programmer blogs (like this one), RFP responses, internal HowTos on an intranet, etc. I think that everyone, even programmers, should know how to write.

You might be wondering why I have included documentation in this thing about reductionism. Too often authors seem to believe that technical writing can be reduced to emitting technical details. There might be some mistaken pride here, where the sub-text is “look at all the technical detail I understand”. I really disagree with this: I see it as an example of inefficiency, ineffectiveness, and a lack of empathy with the user (reader). Technical writing, like visualisations, should develop understanding in someone else’s head, usually to achieve an objective.

When I recently tried to learn about SAML, I got very frustrated. I wanted an introduction to the area that wasn’t merely a diluted version of an in-depth technical discussion, but couldn’t find one. I wanted to know Why before I was told What and How. An introduction isn’t a watered-down substitute for the real thing – it’s scaffolding around the detail. The introduction gives you the higher-level things from the tree diagrams above, so that the lower-level details have a tidy home to hang from.

In the end I wrote the SAML introduction that I hoped I would have found.

Conclusion

There are several things in the current development world, such as automation, measurement and unit testing, that can lead you towards reductionism. Those things are good when done well, but inappropriate reductionism isn’t a price worth paying for them. Skipping higher-level parts of a task can often be a false economy.