This is the second part of my response to the Ministry of Testing’s latest blog challenge: What three things have helped you in your testing career? As I’m not a tester, I’m choosing to re-word this as: What three things have helped you in the quality aspects of your career as a programmer?

- Culture and people

- Thinking about feedback loops

In this article I’ll go into feedback loops a bit. By this I mean feedback loops about an activity or a thing, rather than feedback about a person, such as might involve their line manager. (That kind of feedback is very important, but outside the scope of this article.)

It might seem odd to go on about feedback loops as something that has helped my career, but I have only relatively recently realised that they crop up in so many environments that touch on quality. They aren’t the be-all and end-all, but because they touch so many things it’s worth considering which ones apply in your situation and how they could be improved.

What we’re after and what we need to think about

We have a thing or activity that we want to be as good as reasonably possible, because it’s important to us in some way, so we’re trying to get some feedback about it. It’s worth thinking, at least a little, about the feedback:

- How can we increase the value to us of the feedback?

- How can we reduce the cost to us of getting the feedback?

- What might reduce the value or increase the cost?

This comes up in questions of testability and observability: how good the information we can get about a system is, and at what cost.

At the extreme end of the scale, we might not get the feedback at all. Examples include forgetting to run some tests for our code, or a poorly configured build pipeline that doesn’t run them, without it being obvious to us that they haven’t been run.

A long time ago, at the company I talked about in the previous article, on culture, we had the problem of not running all the unit tests. At the time there were no suitable test runners, so my boss asked me to write one, which I called Bug Finder General. It went through several iterations over many years: the first was a simple shell script that searched for tests below a directory and ran them, and it later became part of our overnight builds and sent reports to the relevant managers.
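Bug Finder General itself isn’t shown here, but its core idea (finding every test below a directory rather than relying on someone remembering to run them) can be sketched in Python. This is a minimal sketch under assumptions of my own: test files are named `test_*.py`, each one is run as a separate process, and an exit status of 0 means it passed. It also treats finding no tests at all as a failure, so that a misconfigured build can’t pass itself off as a clean one.

```python
import subprocess
import sys
from pathlib import Path


def find_tests(root: str) -> list[Path]:
    """Find every file below `root` matching the assumed test_*.py naming convention."""
    return sorted(Path(root).rglob("test_*.py"))


def run_all(root: str) -> dict[str, list[str]]:
    """Run each test file in its own process; an exit status of 0 counts as a pass."""
    results: dict[str, list[str]] = {"passed": [], "failed": []}
    for test in find_tests(root):
        proc = subprocess.run([sys.executable, str(test)], capture_output=True)
        outcome = "passed" if proc.returncode == 0 else "failed"
        results[outcome].append(str(test))
    return results


def report(summary: dict[str, list[str]]) -> int:
    """Print a short report and return an exit status suitable for a build step."""
    total = len(summary["passed"]) + len(summary["failed"])
    if total == 0:
        # No tests found is suspicious: don't let it look like success.
        print("No tests found")
        return 2
    print(f"{len(summary['passed'])} passed, {len(summary['failed'])} failed")
    for name in summary["failed"]:
        print(f"FAILED: {name}")
    return 1 if summary["failed"] else 0
```

An overnight build could call `report(run_all("src"))` and use the returned value as its exit status (`src` is a placeholder path), so that failures, or a suspicious absence of tests, get noticed rather than silently skipped.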

So, it’s worth thinking: how might I miss out on valuable feedback altogether? How can I avoid this? Would automation help?

Often feedback is more valuable the sooner it arrives after the thing that could trigger it. This is the motivation for building in small increments, chopping user stories as small as possible, etc. It’s also why having someone with a critical eye review user stories early is a good idea. What important stuff has been missed? How could things go wrong? Is this consistent with other things? This isn’t testing in the sense of running tests against code, but it can prevent bugs before they’re even implemented in code.

Single stepping your code

I’ve written a longer article about single stepping your code (going through it line by line in a debugger). In my experience it’s a powerful feedback loop with bonus side-effects. You directly see the behaviour of your code, rather than inferring it from external inputs and outputs. You might be surprised when execution skips a line, or the code might behave correctly for the case you’re debugging, but its behaviour helps you to realise that it will go wrong in other circumstances.

The bonus side-effects include a selfish (and hence probably successful) motivation to write concise units of code that have only one reason to change. Given that you need to single step every line of every affected unit of code (e.g. every line of a 20-line method, even if you change only one of those lines), it’s in your own best interests to write concise and focussed code: it reduces the blast radius of a change and hence the single stepping work you need to do.

Users as your only testers

Some people say that human testers shouldn’t be involved in the process of getting code into the hands of users. User feedback is the gold standard of feedback, as users are the people who matter most, so I can see the attraction of this. People who hold this view believe that adding a human tester is an unnecessary delay before getting the best feedback.

However, I encourage you to think about the cost of getting that feedback. (I’m referring to costs on top of creating and maintaining the build pipeline and automated tests necessary to get code into users’ hands as soon as possible.) If users like the code, then great. If there are bugs in the code, the cost of users finding them is unhappy users. Do this often enough and the cost becomes reduced income as users go elsewhere.

You might say that this is the point of the automated tests, and I think that they are necessary but not sufficient. To illustrate this, I will use two examples I know – the websites of my bank and utility company.

The utility company has forms on its site that show an error as soon as you move into a field. (These are the common style of error: red text just underneath the field.) The form doesn’t distinguish between two cases:

- I haven’t finished with this field yet

- I’ve finished with this field and its contents are invalid

There could be automated tests for this such that if the field contains valid text there’s no error, and if the field contains invalid text there’s an error. Someone reviewing the tests might say that all bases are covered, so the automated tests give good feedback quickly. However, a human (such as a customer like me) using the code will get frustrated at being moaned at by the form unfairly – I haven’t had a chance to enter anything yet, so don’t hit me with an error message.
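To make the gap concrete, here is a hypothetical Python model of a single form field (not the utility company’s actual code) in which an error appears only once the user has finished with the field. In a real web page, “finished” would typically be signalled by the field losing focus; here it is an explicit `leave()` call, and the class and method names are my own illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Field:
    validator: Callable[[str], bool]  # returns True if the value is acceptable
    value: str = ""
    finished: bool = False  # set once the user leaves the field (e.g. on blur)

    def type_text(self, text: str) -> None:
        self.value += text

    def leave(self) -> None:
        self.finished = True

    def error(self) -> Optional[str]:
        # Only complain once the user has finished with the field;
        # an empty or half-typed field the user is still in is not an error yet.
        if self.finished and not self.validator(self.value):
            return "Please enter a valid value"
        return None
```

A test that types a few characters and checks that no error appears yet would catch the behaviour described above, whereas tests that only check “valid means no error, invalid means error” would not.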

Similarly, my bank has a couple of different forms where you enter a string of digits. These are for things like account sort codes and multi-factor authentication codes. The string of digits is a fixed length in all cases, and it is split across two or more separate text fields, each for the next chunk of the string, rather than one text field for all of it. For example, there are three fields for a sort code, each for the next two digits.

In some cases, when you fill up one field by entering all the digits it expects, the site automatically moves the cursor to the next field so you can just keep typing. In other cases, you have to click or tab from one field to the next yourself. Both are fine on their own, and both behaviours can be easily tested via automated tests, but the combination (the inconsistency) is a problem. I keep forgetting which behaviour to expect for a given screen, and so either tab when I don’t need to (which means the later digits go who knows where) or I don’t tab when I should (which means the later digits overwrite earlier ones).
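Consistency is easier when every screen uses the same logic for segmented digit entry. As a hypothetical sketch (the function and its Tab-handling rule are my own illustration, not how the bank’s site works), the Python below simulates typing into a row of fixed-length fields: digits auto-advance when a field is full, and a Tab pressed straight after an auto-advance is ignored, so typing straight through and tabbing between fields give the same result.

```python
def fill_segments(keys: list[str], lengths: list[int]) -> list[str]:
    """Simulate typing into a non-empty row of fixed-length digit fields.

    keys: one entry per keystroke; "\t" stands for the Tab key.
    lengths: the number of digits each field holds.
    """
    fields = ["" for _ in lengths]
    current = 0
    just_advanced = False
    for key in keys:
        if key == "\t":
            if just_advanced:
                just_advanced = False  # swallow the redundant Tab
            elif current < len(fields) - 1:
                current += 1  # a deliberate Tab moves on as usual
            continue
        if not key.isdigit():
            continue
        if len(fields[current]) >= lengths[current]:
            continue  # last field is full: ignore extra digits
        fields[current] += key
        just_advanced = False
        # Auto-advance when the current field is full and there is a next one.
        if len(fields[current]) == lengths[current] and current < len(fields) - 1:
            current += 1
            just_advanced = True
    return fields
```

For a sort code, `fill_segments(list("123456"), [2, 2, 2])` and `fill_segments(list("12\t34\t56"), [2, 2, 2])` both give `['12', '34', '56']`, so neither habit sends digits to the wrong place.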

In both cases, the feedback from users is to do with emotion. I am frustrated by this code. No test or test coverage system would do as well as a human tester to detect the problem (either the bug itself, or missing tests that might have detected the bug), because code doesn’t do emotions. Users do, and I suggest you use stunt users (testers) to shield your real users from bad emotions your code might otherwise inflict on them.

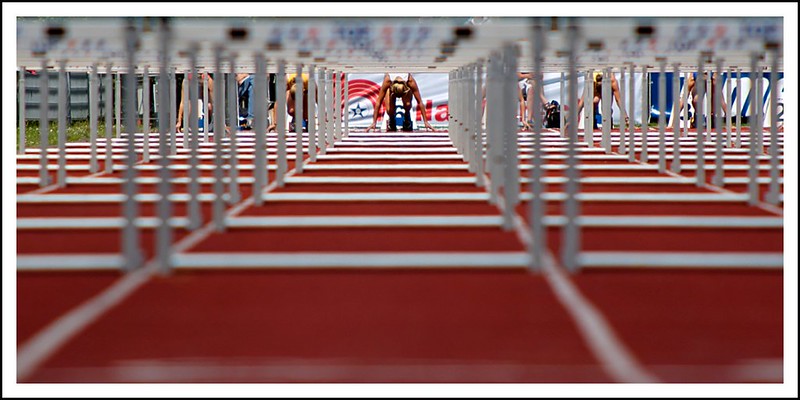

A series of hurdles rather than just one

This is about testing in the loosest sense, but it’s still valuable. For a couple of years, I worked in an R&D department that was alongside a much bigger traditional software development organisation. One of our jobs was to evaluate new ideas, such as new technologies or market opportunities, to see if they were worth the main software development organisation spending time on them.

There were loads of ideas, so the main issue was filtering them rather than generating them. One approach to seeing if an idea was worth throwing over the fence to development would be to go away for several weeks, think really hard, maybe develop some prototypes, and then do a big presentation at the end. I’ll describe this as the one-big-hurdle approach. The hurdle is the response by managers to the presentation, and preparing the idea for this hurdle is the work leading up to this point – the thinking, prototyping etc.

This certainly works, but might not be the most cost-effective approach. We used an alternative approach, which was more like a series of hurdles that start small and get taller. When an idea was picked up, it was allocated only a small amount of time to start with. The aim of this time wasn’t to allow for all the thinking, prototyping etc. from before. Instead, it was to do a quick sanity check: shallow or otherwise incomplete thinking, no prototypes or maybe really basic ones, etc.

At the end of this smaller amount of time, the relevant people would review the idea. It could be rejected at this point, or it could be allowed to continue. If it continued, then more time was spent in thinking, prototyping etc. (more than the first chunk of time). This was to develop the idea further, and there was another review at the end of that. Again, it could be rejected or allowed to continue.

This process could repeat as many times as necessary. It resulted in several hurdles, each based on a relatively small increase in understanding over the previous state. It meant that there was no great ta-daa! moment when the results of many weeks’ work were presented. But it also meant that an idea was rejected as soon as it became clear it wasn’t going to work, rather than time being wasted further developing a poor idea.

There are risks with this kind of approach:

- You reject an idea that further thought would reveal to be a good one;

- There is rework and other inefficiency caused by splitting the work up into many chunks.

The first risk can be addressed by reviewing how much time you allocate at each step, and which ideas you accept and reject. The second is helped by thinking carefully about what work goes into which chunk.

This approach is useful in more directly test-related work too. If code fails sanity tests, which are quick to run and check only basic functionality, then there is usually little point in running more thorough but also more expensive tests. Arranging things like CI/CD pipelines so that expensive operations start only once cheaper operations have shown it’s worth proceeding can reduce wasted time and resources. In this kind of case it’s better to get a subset of feedback quickly, rather than waiting a long time for all of it. (And this test has failed too, and this one, and this one…)
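Here is a minimal Python sketch of that ordering, with hypothetical stage names: stages run cheapest first, and the first failure stops the run so the more expensive stages never start. Most CI systems let you express the same dependency in their own configuration, so treat this as an illustration of the idea rather than something to run as-is.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True if the stage passed


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order (cheapest first), stopping at the first failure.

    Returns whether every stage passed, plus the names of the stages that ran.
    """
    ran: list[str] = []
    for stage in stages:
        ran.append(stage.name)
        if not stage.run():
            return False, ran  # later, more expensive stages are skipped
    return True, ran
```

For example, with stages `build`, `sanity tests` and `full regression suite`, a failing sanity test means the regression suite never starts, and the returned list of stage names shows how far the pipeline got.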

Summary

There are often many kinds of feedback loops available to help assess and improve the quality of code. Some involve people, others are automated. Some involve code, others involve artefacts relating to code such as requirements. I suggest that you look at the feedback loops at play for your code. Can they be improved: can the feedback become more valuable and/or cheaper to get? Are there loops missing?

UPDATE 5th June

Added summary and section on single stepping code